MiND @ NIME – Premier Performance // Thought.Projection

The MiND Ensemble will be premiering our latest work at the international conference on New Interfaces for Musical Expression.

What: Thought.Projection – The human form becomes a dynamic musical score, as parameters derived from EEG data are used to generate visuals and manipulate sound in real time. This dynamic duet for cello and mind features a cello performance by Jeremy Crosmer and musical/visual material developed by the MiND Ensemble.

When: Tuesday, May 22nd, 7pm.

Where: The Mendelssohn Theatre, University of Michigan Central Campus.

$10 General Admission / $5 with student ID

Using the EPOC Headset

Design Lab 1 now has two fully functional EPOC units. Swing by any time and talk to the consultants about making a reservation. Here’s a short instructional video to help get you started. Even if you’ve used the device before, you may pick up a few new tips. Happy hacking!

Fun With Data: Listening to the Brain

In this video we’re listening to raw brainwave data that has been translated into sound and time-stretched to match the original length of the video clip (shot with a cell phone). We’ve uploaded the original data file from the EPOC in three formats – Excel, .txt, and CSV – along with the MATLAB algorithm we used to write the data as an audio file. If you’re interested in messing with the audio track, here’s the original audification, and here’s the time-stretched audio from the video clip. This audio was generated from a single column of data, and this is just one example of how data from the EPOC can be translated into sound… if you haven’t had a chance to get your hands on the device, feel free to download these files and play around!
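For anyone without MATLAB, here is a minimal Python sketch of the same general idea: treat each number in a data column as one audio sample and write the result to a WAV file. This is not the MATLAB algorithm linked above; the function name `audify`, the normalization step, and the 44,100 Hz default are assumptions made for illustration.

```python
import struct
import wave


def audify(samples, path, sample_rate=44100):
    """Write a sequence of numbers to a 16-bit mono WAV file, one number per audio sample.

    Hypothetical helper for illustration; not the ensemble's MATLAB code.
    """
    if not samples:
        raise ValueError("no samples to write")
    mean = sum(samples) / len(samples)            # remove the DC offset
    centered = [s - mean for s in samples]
    peak = max(abs(s) for s in centered) or 1.0   # avoid dividing by zero on flat data
    pcm = [int(round(s / peak * 32767)) for s in centered]  # scale to the 16-bit range
    with wave.open(path, "wb") as wav:
        wav.setnchannels(1)            # mono
        wav.setsampwidth(2)            # 2 bytes = 16 bits per sample
        wav.setframerate(sample_rate)
        wav.writeframes(struct.pack("<%dh" % len(pcm), *pcm))
    return len(pcm)
```

To try it, read one column from the CSV file into a list of floats and call `audify(column, "out.wav")`. Because the EPOC records far fewer samples per second than an audio file plays back, the result is heavily sped up compared to the original recording.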

Analysis of the Audio:

You’ll hear a hum enter at around 6 seconds and leave at around 17 seconds, which corresponds to the roughly 10-second period when Steve closed his eyes. Going one step further… we can use a tone generator to approximate the frequency of this hum as 469 Hz, with ±10 Hz accuracy (when the file is played back at 1/8 the original speed). At 1/8 of the original playback rate (44,100 samples per second), the file plays 5,512.5 samples per second, so 469 Hz corresponds to a periodicity of ~11.75 samples in the original data. Because the EPOC samples at 128 samples/second, this translates to a ~10.89 Hz periodicity in the original data file…

“Hans Berger made many discoveries from the EEG recording and he found that the brain oscillate at about 10 cycles per second when the eyes are closed and relaxed and he called them alpha waves.”

Source: The Brain & the Mind. Psychology Volume 2. 2002: 97.

…and that’s how we can use auditory data analysis to confirm the existence of Alpha Waves!
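The arithmetic in the analysis above can be checked with a few lines of Python. This is only a sketch using the figures quoted in the post (44,100 samples per second, 1/8 playback speed, 128 EPOC samples per second, and a 469 Hz hum with a ±10 Hz tolerance); the function name is made up for illustration.

```python
AUDIO_RATE = 44100   # samples per second of the audio file
SLOWDOWN = 8         # the file was auditioned at 1/8 speed
EPOC_RATE = 128      # EPOC samples per second


def original_frequency(heard_hz, audio_rate=AUDIO_RATE,
                       slowdown=SLOWDOWN, data_rate=EPOC_RATE):
    """Return (period in data samples, frequency in Hz in the original recording)."""
    effective_rate = audio_rate / slowdown       # samples per second actually heard
    period_samples = effective_rate / heard_hz   # data samples per cycle of the hum
    return period_samples, data_rate / period_samples


# Low end of the tolerance, the estimate itself, and the high end.
for heard in (459, 469, 479):
    period, hz = original_frequency(heard)
    print(f"{heard} Hz heard -> {period:.2f} samples/cycle -> {hz:.2f} Hz in the data")
```

Across the ±10 Hz tolerance, the result stays between roughly 10.7 and 11.1 Hz, close to the 10 cycles per second in the quote.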

Inside the MiND Ensemble

Here’s a short documentary produced by Suby Raman, a PhD student in music composition and member of the ensemble:

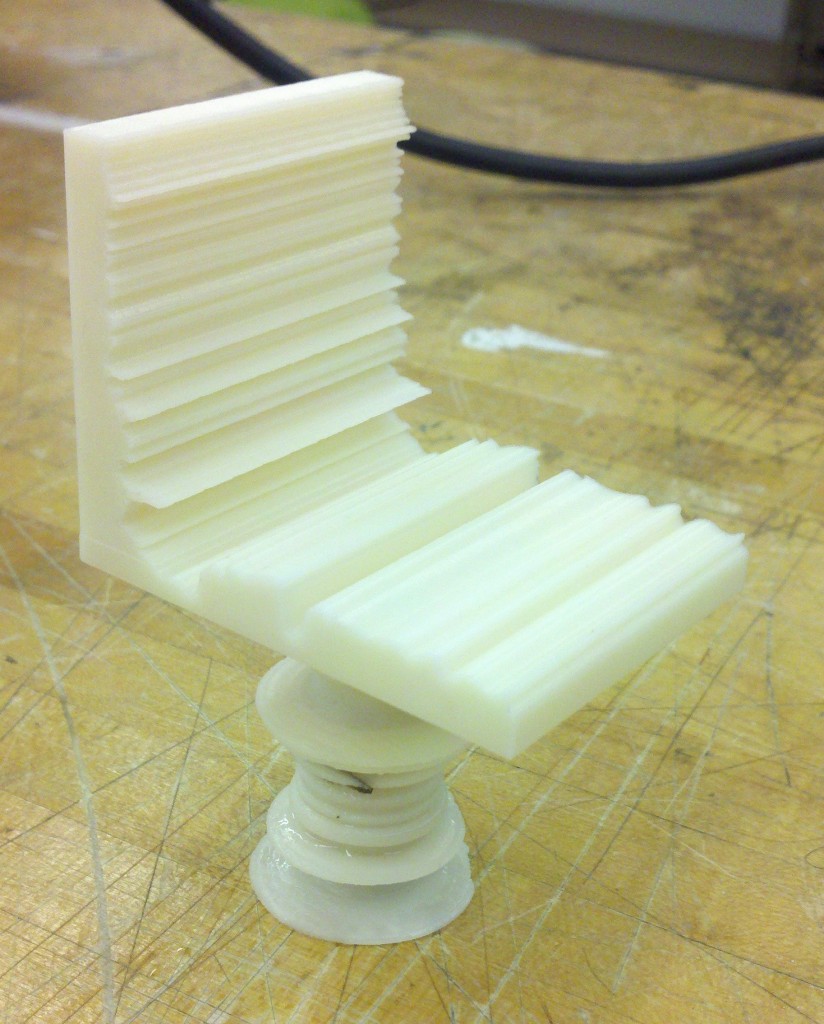

The MiND Chair

Anton Pugh, an M.A. student in Electrical Engineering and member of the MiND Ensemble, has found a unique way to transform his thoughts into reality with the Emotiv EPOC. He’s shared his creative process and source files with the community in hopes that others will build off his ideas. Design Lab 1 now has two fully functional EPOC units available upon request.

Concept

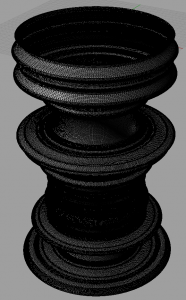

I wanted to use EEG (electroencephalogram) signals to produce something tangible. I decided to use a familiar object (a chair), and somehow manipulate it with brain data. I thought an interesting application would be the brain’s reaction to music.

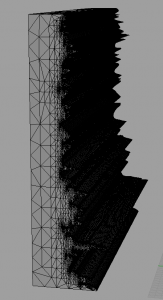

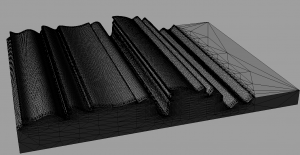

I used a very basic chair with one leg, a seat and a back. The way we interact with chairs is often taken for granted; however, the three components of this chair capture three very important aspects of sitting. First, we have the seat, which provides us with support in the many positions we choose to sit in. I used the meditation parameter of my brain waves to shape the seat because underneath everything happening in our brains, meditation is always supporting our thoughts. Second, there is the back, which we interact with differently depending on our mood and attention. I used the excitement parameter of my brain waves to shape the back because our interaction with the back of a chair is usually the most dynamic. Finally, there is the leg, which connects the chair to the ground. I used the engagement/boredom parameter of my brain waves to shape the leg because, in a sense, our engagement/boredom determines how present we are in reality.

Technical Description

There are several tools that are readily available for interfacing the Emotiv EPOC with other hardware and software. In particular, Mind Your OSC’s translates the brain data to OSC (Open Sound Control) data, which can be interfaced with a variety of musical hardware, as well as Processing.

In order to use Mind Your OSC’s I needed to download the Emotiv EPOC SDK, as Mind Your OSC’s gets the data from the Emotiv Control Panel included in the SDK. To get the data into Processing I used the oscP5 library for Processing, modifying an example project by Joshua Madara.
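To give a rough idea of what arrives on the receiving end, here is a small Python sketch that decodes a simple OSC message by hand. It is a stand-in for illustration, not the Processing/oscP5 code used for this project. It handles only plain messages with float or int arguments (not OSC bundles), and the address `/AFF/Meditation` and the port passed to `listen` are assumptions to check against whatever the sending application actually uses.

```python
import socket
import struct


def _pad(text):
    """Encode an OSC string: ASCII, null-terminated, padded to a multiple of 4 bytes."""
    raw = text.encode("ascii") + b"\x00"
    return raw + b"\x00" * (-len(raw) % 4)


def _read_string(data, offset):
    """Read a null-terminated OSC string; return it and the next 4-byte-aligned offset."""
    end = data.index(b"\x00", offset)
    text = data[offset:end].decode("ascii")
    return text, (end + 4) & ~3   # skip the terminator and any padding


def encode_message(address, value):
    """Build an OSC message carrying a single float32 argument (handy for testing)."""
    return _pad(address) + _pad(",f") + struct.pack(">f", value)


def decode_message(data):
    """Decode an OSC message whose arguments are all float32 ('f') or int32 ('i')."""
    address, offset = _read_string(data, 0)
    tags, offset = _read_string(data, offset)
    if not tags.startswith(","):
        raise ValueError("missing OSC type tag string")
    args = []
    for tag in tags[1:]:
        if tag == "f":
            args.append(struct.unpack_from(">f", data, offset)[0])
        elif tag == "i":
            args.append(struct.unpack_from(">i", data, offset)[0])
        else:
            raise ValueError(f"unsupported OSC type tag: {tag}")
        offset += 4   # both supported types are 4 bytes wide
    return address, args


def listen(port, host="127.0.0.1"):
    """Print every OSC message arriving on a UDP port (runs until interrupted)."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.bind((host, port))
        while True:
            packet, _ = sock.recvfrom(4096)
            print(decode_message(packet))
```

A quick round trip such as `decode_message(encode_message("/AFF/Meditation", 0.5))` (a hypothetical address) shows the layout without needing a headset. In a real project, an existing OSC library is a better choice than a hand-rolled parser.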

I collected data while listening to “Did You See The Words?” by Animal Collective, and once the data had been collected, I used 3D modeling software called Rhino to build the chair. The 3D printers in the UM3D lab require STL files as input, which is one of the basic export options in Rhino.

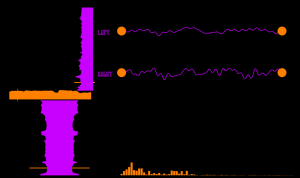

Finally, I created a Processing sketch which plays the song I listened to and shows the point on each piece of the chair at which my brain data is shaping it. For reference, I also used the Minim library for audio analysis and synthesis to display the frequency spectrum and the samples of the song that are playing at any given time.

Result

Overall, I am very pleased with the results. The sculpture alone does not evoke many emotions and really just looks like an uncomfortable chair. However, with the Processing sketch as a visual aid, much more light is shed on what happened while I was listening to the song. If this were to be an installation, I would probably embed the visual aid in a web page, so that people viewing the piece could follow the aid on their smartphones.

The STL files are approximately 87 MB (so my upload allotment is not sufficient), but I will provide them upon request.

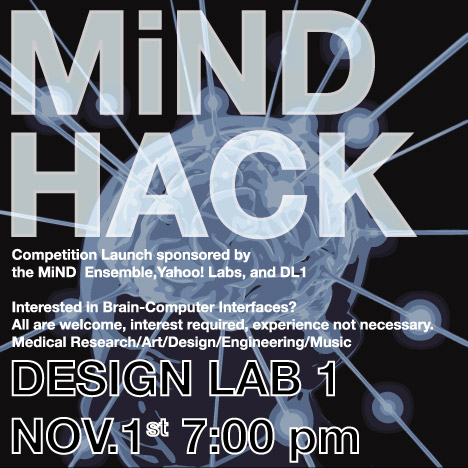

Competition Launch!

Announcing MiND Hack: a design competition challenging teams at the University of Michigan to explore the frontiers of Brain-Computer Interface (BCI) technology. The Music in Neural Dimensions Ensemble is officially inviting students to experiment with BCI technology, which will be made available through the Design Lab in the Duderstadt Center. Interdisciplinary teams will have until the end of the Winter semester to develop their projects, which will then be presented to a panel of judges. Prizes will be awarded in three categories:

$500 – Creative Implementation: Best use of brainwave technology to generate new art / music.

$500 – Software Design: Best use of brainwave technology to create a new game or educational tool.

$500 – Accessibility: Best use of brainwave technology to enable a new form of interaction.

–

Register at the following link: http://bit.ly/rsmlEQ

–

Equipment will be available through the Design Lab Checkout system, and free workshop events will be conducted by members of the MiND Ensemble throughout the year (individual consulting will be available upon request). Individuals interested in registering will have a chance to form teams at the launch event, and can also email themindensemble at gmail.com.

MiND on Vimeo

The 2011 performance is now available on Vimeo. Thanks to everyone who helped make this event successful!

Up and Running

The website is currently under construction. We’ll use this space to provide details for upcoming performances, and content from our projects as they evolve. Check back soon!